Thank you for the encouragement! Unfortunately, I'm not able to share the source code just yet, but I do intend to license it under the Apache license as soon as I can. Please refer to this comment for more info: https://programming.dev/comment/2568603

marcelinecramer

I plan on publishing the majority of my project's source code under the Affero GPL v3, but this terminal emulator and other associated projects that could be made independent from my project will be published under Apache.

This is more of a hobby/passion project for me and the other contributors than anything else, so our primary goal is just to make something that's functional and usable to everyone. We don't plan on ever making a profit off of this unless we get the opportunity to keep the copyleft license while doing so. We hope that the AGPL license always lets free software enthusiasts have the opportunity to use our software over existing VR tech stacks like Unity, VRChat, or Neos, which are all non-freedom-respecting, non-self-hosting, and depending on who you ask, very poorly moderated.

However, right now, the project is so early-stage that I'm not comfortable seriously publicly promoting it just yet. Our documentation is currently in a state of disarray and we're trying to find a way to advance the project in our very limited free time.

I'd love to share the source code, but we can't just yet. Hold on tight!

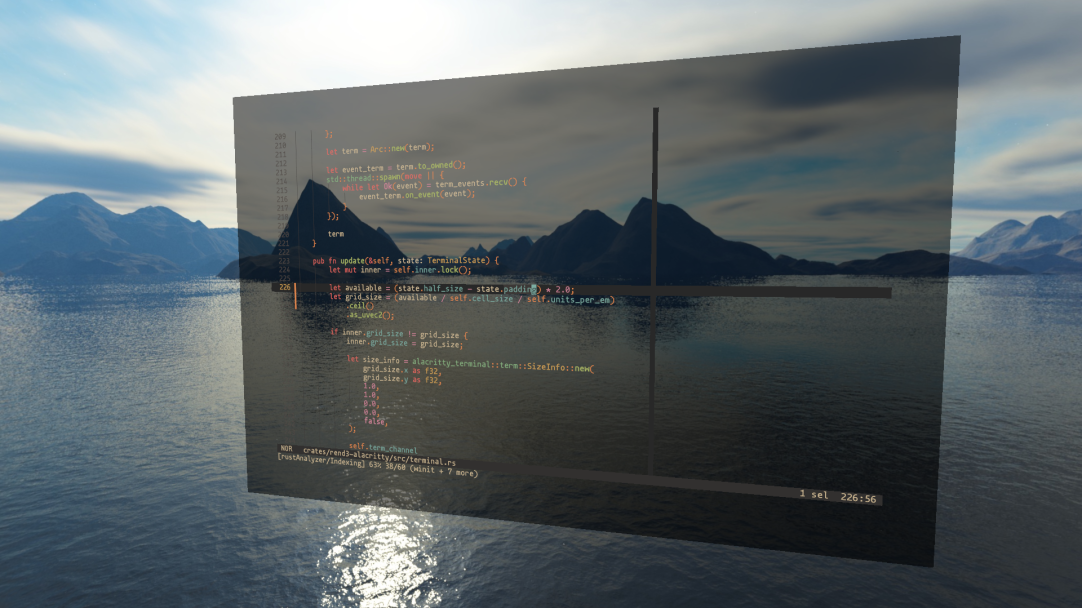

Almost! I'm developing an experimental actor-based VR runtime geared towards supporting hot-reload as a first-class development workflow. The idea is that by letting all functionality, executed as WebAssembly, be added, reloaded, or removed at runtime, it should eventually become possible to develop the space entirely within the space, i.e. without ever needing to remove your VR headset.
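To make the add/reload/remove idea a bit more concrete, here's a minimal sketch of a registry whose actors can be swapped while the host keeps running. This is not the project's actual design (the source isn't public yet): the `Actor` trait, `Registry`, `Echo`, and `Shout` are all hypothetical names, and a plain Rust trait object stands in for what would really be a WebAssembly module instance.

```rust
use std::collections::HashMap;

/// Hypothetical stand-in for a loaded WebAssembly module instance.
trait Actor {
    /// Handles one message and returns a reply.
    fn handle(&mut self, message: &str) -> String;
}

/// Hypothetical registry mapping actor names to their current implementation.
struct Registry {
    actors: HashMap<String, Box<dyn Actor>>,
}

impl Registry {
    fn new() -> Self {
        Self { actors: HashMap::new() }
    }

    /// Adds an actor, or hot-reloads it if the name is already taken.
    /// Returns true when an existing actor was replaced.
    fn load(&mut self, name: &str, actor: Box<dyn Actor>) -> bool {
        self.actors.insert(name.to_string(), actor).is_some()
    }

    /// Removes an actor at runtime. Returns true if it existed.
    fn unload(&mut self, name: &str) -> bool {
        self.actors.remove(name).is_some()
    }

    /// Delivers a message to a named actor, if it is currently loaded.
    fn send(&mut self, name: &str, message: &str) -> Option<String> {
        self.actors.get_mut(name).map(|actor| actor.handle(message))
    }
}

/// First "version" of some behavior: replies with the message unchanged.
struct Echo;

impl Actor for Echo {
    fn handle(&mut self, message: &str) -> String {
        message.to_string()
    }
}

/// Second "version" of the same behavior: replies in upper case.
struct Shout;

impl Actor for Shout {
    fn handle(&mut self, message: &str) -> String {
        message.to_uppercase()
    }
}
```

Loading `Shout` under the same name that `Echo` was using changes what the next `send` returns without restarting anything else, which is the property hot-reload relies on. A real runtime would also have to decide what happens to the old actor's state and in-flight messages, which this sketch ignores.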

A prerequisite for that is some "bootstrapping" environment that doesn't need the space to have pre-existing VR tooling for writing new behavior and compiling to WebAssembly. I decided that a terminal emulator had the highest ratio of flexibility to time investment, based on my experiences building another prototype terminal emulator using alacritty_terminal.

Chances are that the native terminal emulator will come in handy over the entire lifetime of my project's development. I and the other contributors are regular modal editor users and, from what I know, there are currently no self-hosting WebAssembly compilers that could be invoked without some kind of access to the native OS.

It is a VR project! I needed fast, highly legible text from a variety of viewing angles, and MSDFs (multi-channel signed distance fields) fit the bill.
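For anyone who hasn't used MSDFs before, the per-pixel decode is tiny. Below is a rough sketch of the step commonly used with msdfgen-style atlases: take the median of the three color channels as the signed distance, then scale it by how many screen pixels the atlas's distance range covers. It's written as plain CPU-side Rust for illustration; in practice this logic normally lives in a fragment shader, and the function names and the `screen_px_range` parameter here are my own, not taken from the project.

```rust
/// Median of three values, which is how an MSDF texel is collapsed
/// back into a single signed distance.
fn median(r: f32, g: f32, b: f32) -> f32 {
    r.min(g).max(r.max(g).min(b))
}

/// Converts one MSDF texel (RGB in 0..=1) into glyph coverage in 0..=1.
///
/// `screen_px_range` is assumed to be computed by the caller: the number of
/// screen pixels spanned by the atlas's distance range at the current scale.
fn msdf_coverage(texel: [f32; 3], screen_px_range: f32) -> f32 {
    // 0.5 in the texture corresponds to the glyph edge.
    let signed_distance = median(texel[0], texel[1], texel[2]) - 0.5;
    // Map the distance to an antialiased opacity around the edge.
    (signed_distance * screen_px_range + 0.5).clamp(0.0, 1.0)
}
```

Because the edge is reconstructed from distances rather than sampled from a bitmap, the same small atlas stays sharp when the text is scaled or viewed at an angle, as long as `screen_px_range` stays at roughly one pixel or more.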

I'm curious, when you say a VR framework, do you mean something like StereoKit?

The go-to API to use these days for VR (or XR more broadly) is OpenXR, which is a standard for AR/VR developed by the Khronos Group, who also standardized OpenGL and Vulkan. OpenVR is an older, SteamVR-specific VR library that has been phased out in favor of OpenXR. Oculus also had its own Oculus SDK for developing VR apps for both desktop VR and Quest, which has likewise been deprecated in favor of OpenXR.

When you initialize OpenXR, whichever API loader you've instantiated connects to its compositor, which is typically a daemon process. The OpenXR API has extensions for whether you're rendering using OpenGL, Vulkan, DirectX, whatever, and then you hook into the compositor with your graphics-specific extension to exchange GPU objects for compositing.

OpenXR also handles frame synchronization (which is a tricky subject in VR because of how tight latency needs to be to not give the user motion sickness) and input handling, which is compositor- and hardware-specific.
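To give a feel for the frame synchronization part, here's a rough sketch of the order of operations in one OpenXR frame: wait for the runtime to say when to start (`xrWaitFrame`), begin the frame (`xrBeginFrame`), render for the predicted display time, then submit (`xrEndFrame`). The `FrameApi` trait and `MockCompositor` below are hypothetical stand-ins I made up so the example runs without a headset; they are not the `openxr` crate's API, and a real session has more to deal with (swapchains, views, session state).

```rust
/// The two pieces of xrWaitFrame's result that this sketch uses.
#[derive(Clone, Copy)]
struct FrameState {
    predicted_display_time_ns: i64,
    should_render: bool,
}

/// Hypothetical trait mirroring the three OpenXR frame calls.
trait FrameApi {
    /// Stands in for xrWaitFrame: blocks until the runtime wants a new frame.
    fn wait_frame(&mut self) -> FrameState;
    /// Stands in for xrBeginFrame.
    fn begin_frame(&mut self);
    /// Stands in for xrEndFrame: submits layers for the given display time.
    fn end_frame(&mut self, display_time_ns: i64, submitted_layers: usize);
}

/// Runs one frame. Returns whether the scene was actually rendered.
fn run_frame<A: FrameApi>(api: &mut A, mut render: impl FnMut(i64)) -> bool {
    let state = api.wait_frame();
    api.begin_frame();
    // Skip the expensive work when the runtime says nothing would be shown,
    // but still end the frame so the loop stays in sync.
    let layers = if state.should_render {
        render(state.predicted_display_time_ns);
        1
    } else {
        0
    };
    api.end_frame(state.predicted_display_time_ns, layers);
    state.should_render
}

/// Fake compositor that records the calls it receives.
struct MockCompositor {
    next_time_ns: i64,
    visible: bool,
    log: Vec<String>,
}

impl FrameApi for MockCompositor {
    fn wait_frame(&mut self) -> FrameState {
        self.log.push("wait".to_string());
        FrameState {
            predicted_display_time_ns: self.next_time_ns,
            should_render: self.visible,
        }
    }

    fn begin_frame(&mut self) {
        self.log.push("begin".to_string());
    }

    fn end_frame(&mut self, display_time_ns: i64, submitted_layers: usize) {
        self.log
            .push(format!("end t={} layers={}", display_time_ns, submitted_layers));
    }
}
```

The important detail is that the app renders for the predicted display time the runtime hands back, not for "now", which is a big part of how latency stays tight enough to be comfortable.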

For Rust's purposes, you can find an example of how to plumb OpenXR's low-level graphics to wgpu here: https://github.com/philpax/wgpu-openxr-example

Once you can follow OpenXR's rules for graphics and synchronization correctly, you're off to the races, and there isn't much of a difference, at least on the lowest level, between your XR runtime and any other game engine or simulator.

StereoKit is really cool because it builds on OpenXR with a bunch of useful features like a UI system and virtual hands rendered where your controllers are. It also supports desktop mode.

If you're interested in this kind of stuff, I highly recommend reading the OpenXR specification. Despite it being made by the same consortium that wrote the OpenGL and Vulkan specs, the OpenXR spec is highly readable and is a really good introduction to low-level VR software engineering.