Best assessment I've heard: Current AI is an aggressive autocomplete.


I’ve found that relying on it is a mistake anyhow; the amount of incorrect information I’ve seen from ChatGPT has been crazy. It’s not a bad way to get started, but it’s like reading a grade-school kid's homework: you need to proofread the heck out of it.

What always strikes me as weird is how trusting people are of inherently unreliable sources. Like, why the fuck does a robot get trust automatically? It's a fuckin' miracle it works in the first place. You double-check that robot's work for years and it's right every time? Yeah, okay, maybe then start to trust it. Until then, what reason is there not to be skeptical of everything it says?

People who Google something and then accept as fact whatever Google pulls out of webpages and puts at the top... confuse me. Like all machines, it fails sometimes. Why would we assume it never does?

At least a Google search gets you a reference you can point at. It might be wrong, it might not. Maybe it points to other references that you can verify.

ChatGPT outright makes shit up and there's no way to see how it came to a given conclusion.

I feel like the AI in self-driving cars is the same way. It's like driving with a 15-year-old who just got their learner's permit.

Turns out that getting a computer to do 80% of a good job isn't so great. It's that extra 20% that makes all the difference.

Nice one! I have heard it called a fuzzy JPG of the internet.

Over half of tech industry workers have seen the "great demo -> overhyped bullshit" cycle before.

You just have to leverage the agile AI blockchain cloud.

Once we're able to synergize the increased throughput of our knowledge capacity we're likely to exceed shareholder expectation and increase returns company wide so employee defecation won't be throttled by our ability to process sanity.

Sounds like we need to align on triple underscoring the double-bottom line for all stakeholders. Let’s hammer a steak in the ground here and craft a narrative that drives contingency through the process space for F24 while synthesising synergy from a cloudshaping standpoint in a parallel tranche. This journey is really all about the art of the possible after all so lift and shift a fit for purpose best practice and hit the ground running on our BHAG.

NoSQL, blockchain, crypto, the metaverse, just to name a few recent examples.

AI is overhyped, but so far it is more useful than any of those other examples.

Sometimes every year.

Largely because we understand that what they're calling "AI" isn't AI.

This is a growing pet peeve of mine. If and when actual AI becomes a thing, it'll be a major turning point for humanity comparable to things like harnessing fire or electricity.

...and most people will be confused as fuck. "We've had this for years, what's the big deal?" -_-

I also believe that will happen! We will not be prepared since many don't understand the differences between what current models do and what an actual general AI could potentially do.

It also saddens me that many people don't know, or ignore, how fundamental abstract reasoning is to our understanding of how human intelligence works, and that LLMs simply aren't intelligent in that sense (or at all, if you take a tight definition of intelligence).

I think it will be the next big thing in tech (or "disruptor" if you must buzzword). But I agree it's being way over-hyped for where it is right now.

Clueless executives barely know what it is; they just know they want to get ahead of it in order to remain competitive. Marketing types reporting to those executives oversell it (because that's their job).

One of my friends is an overpaid consultant for a huge corporation, and he says they are trying to force-retrofit AI into things where it barely makes any sense... just so that they can say it's "powered by AI".

On the other hand, AI is much better at some tasks than humans. That AI skill set is going to grow over time. And the accumulation of those skills will accelerate. I think we've all been distracted, entertained, and a little bit frightened by chat-focused and image-focused AIs. However, AI as a concept is broader and deeper than just chat and images. It's going to do remarkable stuff in medicine, engineering, and design.

Personally, I think medicine will be the most impacted by AI. Medicine has already been increasingly implementing AI in many areas, and as the tech continues to mature, I am optimistic it will have tremendous effect. Already there are many studies confirming AI's ability to outperform leading experts in early cancer and disease diagnoses. Just think what kind of impact that could have in developing countries once the tech is affordably scalable. Then you factor in how it can greatly speed up treatment research and it's pretty exciting.

That being said, it's always wise to remain cautiously skeptical.

> AI's ability to outperform leading experts in early cancer and disease diagnoses

It does, but it also has a black box problem.

A machine learning algorithm tells you that your patient has a 95% chance of developing skin cancer on his back within the next 2 years. Ok, cool, now what? What, specifically, is telling the algorithm that? What is actionable today? Do we start oncological treatment? According to what, attacking what? Do we just ask the patient to aggressively avoid the sun and use liberal amounts of sunscreen? Do we start a monthly screening, bi-monthly, yearly, and for how long do we keep it up? Should we only focus on the part that shows high risk, or everywhere? Should we use the ML every single time? What is the most efficient and effective use of the tech? We know it's accurate, but is it reliable?

There are a lot of moving parts to a general medical practice. AI has to find a proper role, and that requires not just an abstract statistic from an ad-hoc study but a systematic approach to healthcare. Right now it doesn't have that, because the AI model can't tell its handlers what it is seeing, what it means, and how it fits into the holistic view of human health. We can't just blindly trust it when there are human lives on the line.

As you can see, this seems to be relegating AI to a research role for the time being, and not a diagnostic one yet.

It is overrated, at least when people treat AI as some sort of brain crutch that spares them from learning stuff.

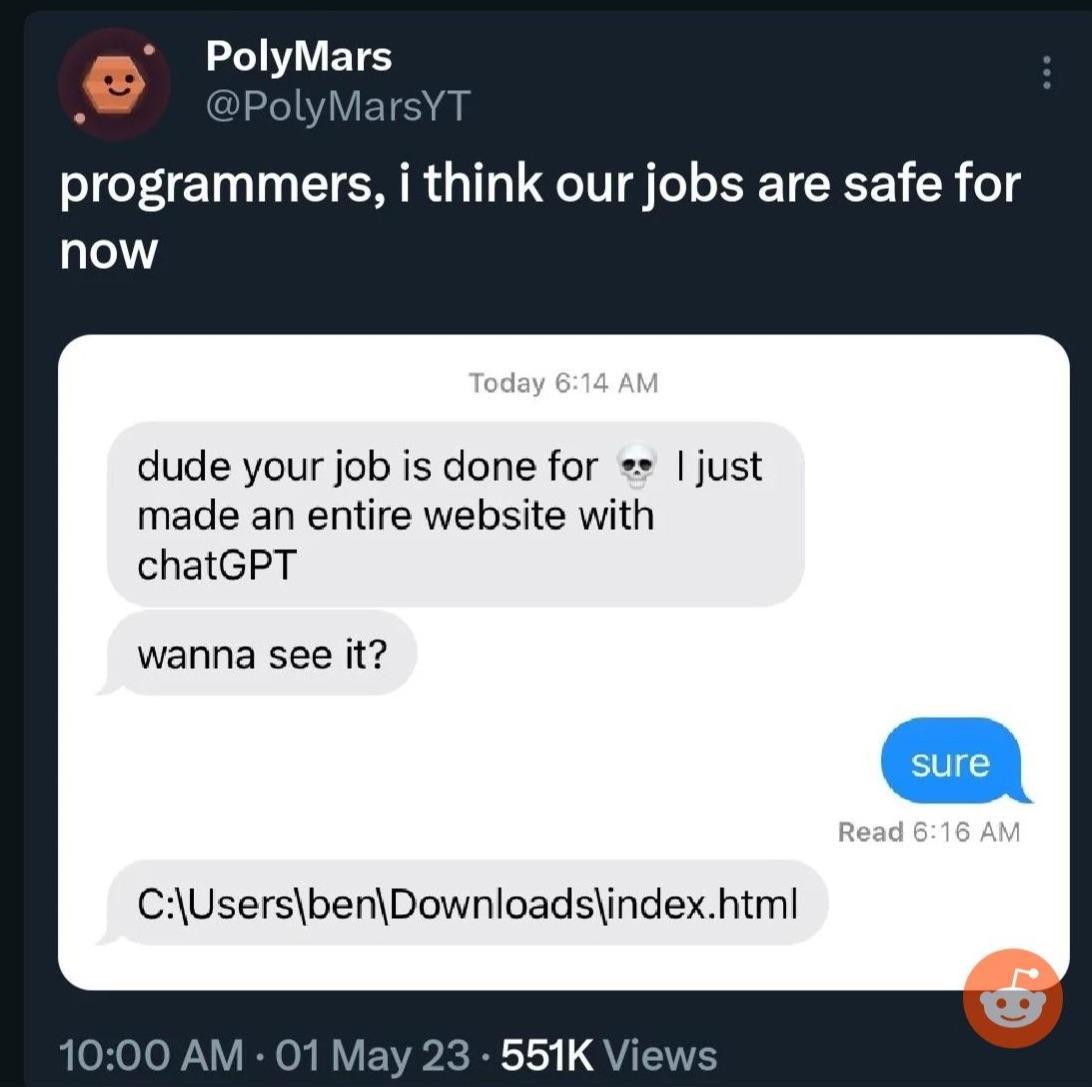

My boss now believes he can "program too" because he lets ChatGPT write scripts for him that, more often than not, are poor bs.

He also pastes chunks of our code into ChatGPT whenever our code has bugs or we aren't finished with everything in 5 minutes, as some kind of "gotcha moment", ignoring that the solutions he then provides don't work.

Too many people see LLMs as authorities they just aren't....

It is overrated. It has a few uses, but it's not a generalized AI. It's like calling a basic calculator a computer. Sure, it is an electronic computing device, and it makes a big difference in calculating speed for finances or retail cashiers or whatever. But it's not a general-purpose computing system that can compute basically anything it's given instructions for, which is what we think of when we hear something is a "computer". It can only do basic math. It could never be used to display a photo, much less make a complex video game.

Similarly, the current thing that's called "AI" can learn within the very narrow subject it is designed for. It can't learn just anything. It can't make inferences beyond its training material, or understand it. It can't create anything totally new; it just remixes things. It could never actually create a new genre of games with some kind of interface that has never been thought of, or discover the exact mechanisms of how gravity works, because those things aren't in its training material, since they don't exist yet.

Many areas of machine learning, particularly LLMs, are making impressive progress, but the usual Y Combinator tech-bro types are overhyping things again. Same as every other bubble, including the original Internet one, the crypto scams, and half the bullshit companies they run that add fuck all value to the world.

The cult of bullshit around AI is a means to fleece investors. Seen the same bullshit too many times. Machine learning is going to have a huge impact on the world, same as the Internet did, but it isn't going to happen overnight. The only certain thing that will happen in the short term is that wealth will be transferred from our pockets to theirs. Fuck them all.

I skip most AI/ChatGPT spam in social media with the same ruthlessness I skipped NFTs. It isn't that ML doesn't have huge potential but most publicity about it is clearly aimed at pumping up the market rather than being truly informative about the technology.

Reality: most tech workers view it as fairly rated or slightly overrated according to the real data: https://www.techspot.com/images2/news/bigimage/2023/11/2023-11-20-image-3.png

Which is fair. AI at work is great, but it only does fairly simple things. Nothing I can't do myself, but it saves my sanity and time.

It's all I want from it, and it delivers.

I remember when it first came out I asked it to help me write a MapperConfig custom strategy, and the answer it gave me was so fantastically wrong (even with prompting) that I lost an afternoon. Honestly, the only useful thing I've found for it is getting it to find potential syntax errors in Terraform code that the plan might miss. It doesn't even complement my programming skills the way a traditional search engine can; instead it assumes a solution that is usually wrong, and you are left trying to build your house on the boilerplate sand it spits out at you.

I have a doctorate in computer engineering, and yeah it’s overhyped to the moon.

I’m oversimplifying it and someone will ackchyually me, but once you understand the core mechanics the magic is somewhat diminished. It’s linear algebra and matrices all the way down.

We got really good at parallelizing matrix operations and storing large matrices and the end result is essentially “AI”.
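To make the "matrices all the way down" point concrete, here's a toy sketch (not how any particular model is implemented) of a two-layer neural network forward pass in plain Python: multiply by a weight matrix, clip negatives to zero, multiply again. The weights are made-up numbers for illustration; real models do the same kind of thing with billions of weights on GPUs, which is where the parallelized matrix math comes in.

```python
# Toy sketch: a neural network "forward pass" is mostly matrix multiplication.
# All weights below are hypothetical values chosen for illustration.

def matmul(a, b):
    """Multiply matrix a (n x k) by matrix b (k x m), both given as lists of rows."""
    return [[sum(a[i][t] * b[t][j] for t in range(len(b))) for j in range(len(b[0]))]
            for i in range(len(a))]

def relu(m):
    """Elementwise nonlinearity: replace negative entries with 0."""
    return [[max(0.0, x) for x in row] for row in m]

def forward(x, w1, w2):
    """Two layers: multiply, clip negatives, multiply again."""
    hidden = relu(matmul(x, w1))
    return matmul(hidden, w2)

x = [[1.0, 2.0]]                 # one input with 2 features (1 x 2)
w1 = [[0.5, -1.5, 0.25],         # 2 x 3 weight matrix
      [0.5, 0.5, 0.25]]
w2 = [[1.0], [2.0], [-1.0]]      # 3 x 1 weight matrix

print(forward(x, w1, w2))        # [[0.75]]
```

The middle hidden value comes out negative (-0.5) and gets zeroed by `relu`; everything else is multiply-and-add.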

I'd say it's like the dot-com bubble. Yeah, it's incredible new and emerging tech, but that doesn't mean it isn't overhyped.

That’s because it is overrated, and the people in the tech industry are actually qualified to make that determination. It’s a glorified assistant, nothing more. We’ve had these for years; they’re just getting a little bit better. It’s not gonna replace a network stack admin or a programmer anytime soon.

There is a lot of marketing about how it's going to disrupt every possible industry, but I don't think that's reasonable. Generative AI has uses, but I'm not totally convinced it's going to be this insane omni-tool just yet.

Whenever we have new technology, there will always be folks flinging shit at the wall to see what sticks. AI is no exception, and you're most likely correct that not every problem needs an AI-powered solution.

I work in AI, and I think AI is overrated.

It is currently overhyped and so much of it just seems to be copying the same 3 generative AI tools into as many places as possible. This won't work out because it is expensive to run the AI models. I can't believe nobody talks about this cost.

Where AI shines is when something new is done with it, or there is a significant improvement in some way to an existing model (more powerful or runs on lower end chips, for example).

Of course, because the hype didn't come from tech people but from content writers, designers, PR people, etc., who all thought they didn't need tech people anymore. The moment ChatGPT started being popular, I started getting debugging requests from a few designers. They went there and asked it to write a plugin or a script they needed. The only problem was it didn't really work like it should. Debugging that code was a nightmare.

I've seen a few clever uses. A couple of our clients made a "chat bot" whose reference was their poorly written documentation. You'd ask the bot something technical related to that documentation and it would decipher the mess. I still claim writing better documentation would have been a smarter move, but what do I know.
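The comment doesn't say how those chatbots were built. One common pattern (an assumption here) is to look up the documentation section most relevant to the question and paste it into the prompt sent to the model. Below is a toy sketch of just that lookup step, using crude word-overlap scoring in place of the embedding search real systems often use; the section names, text, and helper functions are all hypothetical, and the model call itself is left out.

```python
import re

def tokenize(text):
    """Lowercase alphanumeric words only; crude, but enough for a sketch."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))

def best_section(question, sections):
    """Return the title of the doc section sharing the most words with the question."""
    q = tokenize(question)
    return max(sections, key=lambda title: len(q & tokenize(sections[title])))

def build_prompt(question, sections):
    """Paste the best-matching section into a prompt for some (unspecified) model."""
    title = best_section(question, sections)
    return f"Answer using only this documentation:\n{sections[title]}\n\nQuestion: {question}"

docs = {  # hypothetical documentation sections
    "install": "Run the installer and set the API key in config.yaml.",
    "billing": "Invoices are generated on the first day of each month.",
}
print(best_section("Where do I set the API key?", docs))  # install
```

Note that this only helps as much as the docs do: if the answer isn't written down anywhere, no section will match well, which is the commenter's point about fixing the documentation instead.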

I work in tech. AI is overrated.

On a podcast I listen to, where tech people discuss security topics, they finally got to something related to AI, hesitated, snickered, and said "Artificial Intelligence, I guess, is what I have to say now instead of Machine Learning." Then both the host and the guest just started belting out laughs for a while before continuing.

I'll join in on the cacophony in this thread and say it truly is way overrated right now. Is it cool and useful? Sure. Is it going to replace all of our jobs and do all of our thinking for us from now on? Not even close.

I, as a casual user, have already noticed some significant problems with the way that it operates such that I wouldn't blindly trust any output that I get without some serious scrutiny. AI is mainly being pushed by upper management-types who don't understand what it is or how it works, but they hear that it can generate stuff in a fraction of the time a person can and they start to see dollar signs.

It's a fun toy, but it isn't going to change the world overnight.

Because it is?

On one hand, there's the emergence of the best chat bot we've ever created. Neat, I guess.

On the other hand, there's VC money scurrying around for the next big thing to invest in, lazy journalism looking for a source of new content to write about, talentless middle management looking for something to latch on to so they can justify their existence through cost cutting, and FOMO from people who don't understand that it's just a fancy chat bot.

I use GitHub Copilot. It really is just fancy autocomplete. It's often useful and is indeed impressive. But it's not revolutionary.
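For a sense of what "autocomplete" means in the most literal form, here's a toy sketch: count which word follows which in a tiny made-up corpus, then repeatedly append the most frequent follower. This is a bigram model, not how Copilot or ChatGPT work internally (they use large neural networks over tokens), but it shows the same basic "predict the next token from what came before" framing. The corpus and function names are hypothetical.

```python
from collections import Counter, defaultdict

def train_bigrams(text):
    """Count, for every word, which words follow it and how often."""
    words = text.split()
    follows = defaultdict(Counter)
    for prev, nxt in zip(words, words[1:]):
        follows[prev][nxt] += 1
    return follows

def autocomplete(follows, start, n_words):
    """Greedily append the most frequent next word, up to n_words times."""
    out = [start]
    for _ in range(n_words):
        candidates = follows.get(out[-1])  # .get avoids creating empty entries
        if not candidates:
            break  # never saw anything follow this word; stop early
        out.append(candidates.most_common(1)[0][0])
    return " ".join(out)

corpus = "the model predicts the next word and the next word after that"
model = train_bigrams(corpus)
print(autocomplete(model, "the", 2))  # the next word
```

It happily produces fluent-looking output with no notion of whether it's true, only of what usually comes next.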

I've also played with ChatGPT and tried to use it to help me code, but never successfully. The reality is I only try it if Google has failed me, and then it usually makes up something that sounds right but is in fact completely wrong. Probably because it's been trained on the same insufficient data I've been looking at.