It doesn't help that all the humans have beauty filters on

Yeah, they have that 'no pores' look that selfie filters give, which is somewhat uncanny.

Human male 40 absolutely does not have a filter; that's probably why he was rated the most human-looking human.

Not only that, but several of them are a bit weird-looking (sorry to those people...): 37 and 47 have obvious asymmetries, 31 is a bit bug-eyed, and 18 seems to have been taken with a super-telephoto lens or has a really flat face.

This reminds me of an argument I saw here last week about AI and its use as a grammar checker. You can definitely do it, but you’re going to have all the markers of using AI to cheat.

Not really. If you write the text first and only apply minor changes to fix the grammar (rather than rewriting entire sentences), no AI detector will flag it, because the sentence structure and patterns won't match typical AI output.

Be sure to remember that, at best, AI takes prompts as interpretable guidelines and a request of “grammar checking” can involve some additional, unwarranted, restructuring. Points to whoever notices both AI-isms that I noticed ChatGPT added to my grammar-checked critique of grammar checking.

I feel like that "corporate wants you to find the differences between these two photos" meme. Isn't everyone in those photos, in both the top and bottom rows, white?

Edit: Ah, I see, OP has given this a highly misleading title. The "whiteness" of the faces is not actually particularly relevant. In another thread someone summarized what the article is actually about:

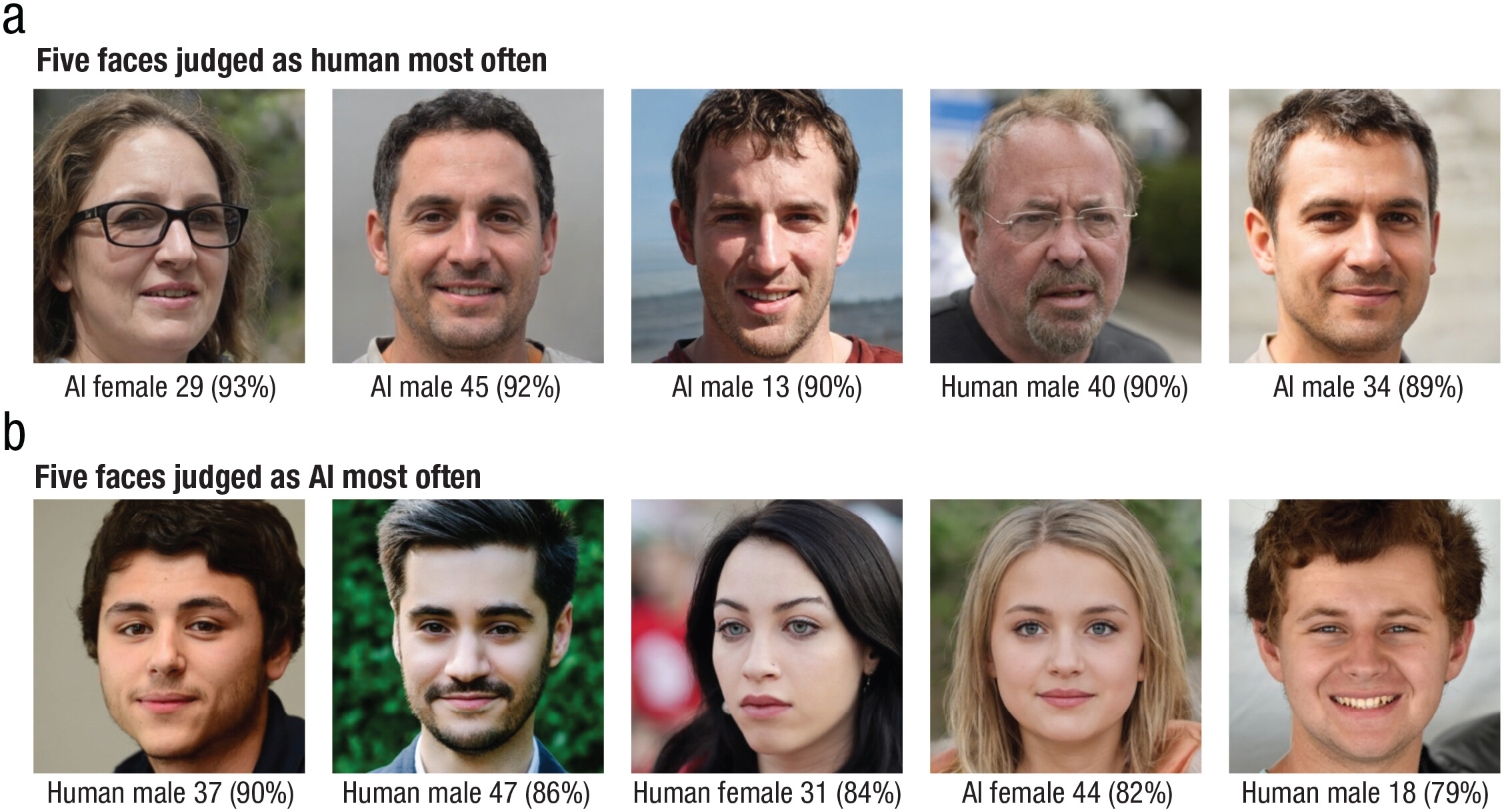

For anyone who doesn’t want to read the paper, they basically took 60 white men and 60 white women and showed them a whole bunch of white faces, half of which were generated by AI. It turns out that AI faces were rated as more human-like than actual humans, and they had some hypotheses as to why. Principally, AI by its nature generates images close to “average”, while real people tend to have features that are not “average”. The study focused on white faces because most AI models have been trained mostly on white faces, so AI tends to do better with them.

An op? Making a misleading title? On Lemmy?

Man, it's as if the severe lack of moderation and rules that so many people wanted when moving from Reddit is hurting the quality of posts on here.

Fr fr nobody ever wrote a clickbait headline on reddit.

Every culture thinks they invented the bag full of bags.

> It turns out that AI faces were rated as more human-like than actual humans

I tried guessing from the Ars Technica article and got a whopping 1 out of 8 correct.

Imagine being one of those people with a face people think is AI generated.

It’s the “living on Null street” problem of the future

Are you seriously going to tell me that human male 47 is real?

I do not believe you

His skin is simply too smooth, plus his eyes are at different angles and the perspective is wrong.

I feel like the real faces were heavily edited, which made them lose a lot of realism. I do get an uncanny-valley feeling looking at some of them.

Now show us hands.

Not really much of an issue with SDXL any more, and even SD1.5 got quite good at the end. Admittedly I haven't stress-tested either, though (things like clasped hands etc.). It's also not a hand-specific thing; it just happens more commonly with fingers because they're small features:

What happens is that diffusion-type inference first nails down gross structure (which limb is where) and then fills in details. Sometimes steps somewhere in the middle decide that a limb should be somewhere else, though, and suddenly you have two. If the steps immediately after don't decide "that old limb doesn't look like it should be there" and erase it, later steps will happily refine both to photorealism, because they don't even look at the overall composition. That is, it's not an issue of anatomical knowledge or of not having seen enough hands, but of the model changing its mind without backtracking. It's actually astonishing how good it can get at not making that mistake without being able to tell that it has two competing goals in mind.

Remember when you could just look them in the eyes, and if the eyes were in the center of the image, it was not a real human?

Such is the miracle of sufficiently trained autoencoders

Creepy to think that none of those faces are real, but at the same time they look just like real people.

So.. sidebar. Hang on.

When human simulacra start to approach realism, they go into the “uncanny valley” once they’re pretty good but still obviously off. What’s after the uncanny valley once they’re totally convincing? Is there even a name for that?

It's a valley, meaning you can be either before it, in it, or after it. We are after.

Could you add “white” between “actual” and “human”?

I'd be curious to see how they decided which AI faces and which real faces to present. It might be in the paper, but I'm too lazy to read it when I'm 95% convinced I already know the answer.

We used the 100 AI and 100 human White faces (half male, half female) from Nightingale and Farid. The AI faces were generated using StyleGAN2. The human faces were selected from the Flickr-Faces-HQ Dataset to match each of the AI faces as closely as possible (e.g., same gender, posture, and expression). All stimuli had blurred or mostly plain backgrounds, and AI faces were screened to ensure they had no obvious rendering artifacts (e.g., no extra faces in background). Screening for artifacts mimics how real-world users screen AI faces, either as scientists or for public use, and therefore captures the type and range of stimuli that appear online. Participants were asked to resize their screen so that stimuli had a visual angle of 12° wide × 12° high at ~50 cm viewing distance.
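For a sense of scale, a 12° visual angle at ~50 cm works out to a stimulus roughly 10.5 cm on a side. A minimal sketch of that arithmetic, assuming a flat screen with the stimulus centered on the line of sight (the function name is just illustrative, not from the paper):

```python
import math

def stimulus_width_cm(visual_angle_deg: float, distance_cm: float) -> float:
    """Physical size that subtends visual_angle_deg at distance_cm.

    Assumes a flat screen with the stimulus centered on the viewer's line of
    sight, so each half of the stimulus subtends half the angle.
    """
    half_angle = math.radians(visual_angle_deg) / 2
    return 2 * distance_cm * math.tan(half_angle)

# 12 degrees at ~50 cm viewing distance, as described in the quoted methods
width = stimulus_width_cm(12, 50)
print(f"{width:.1f} cm")  # prints "10.5 cm"
```

Since the stimuli were 12° × 12°, the same figure applies to the height.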

I don't know why people (not saying you, more directed at the top commenter) keep acting like cherry-picking AI images in these studies invalidates the results. Cherry-picking is how you use AI image generation tools; that's why most will (or can) generate several at once so you can pick the best one. If a malicious actor were trying to fool people, of course they'd use the most "real"-looking ones instead of just the first to generate.

Frankly, the studies would be useless if they didn't cherry-pick, because they wouldn't line up with real-world usage.

Tbh I'm more concerned about how they chose the human faces. I can't explain it, but it feels like they were biased toward choosing 'fake-looking' faces, lol

I understand why you're cautious with the "accusation" (don't put too much weight on that word; it's the idea I want to convey, not any malicious intent), but in this case I am saying that cherry-picking invalidates the findings as they are stated.

If the findings were framed around "it's easier to fool people using white AI generated faces", or something similar, I'd be on board with it. The way it sounds right now is "AI generated faces don't have all these artifacts 99% of the time" (I'm paraphrasing A LOT, but you get what I mean.)

> The way it sounds right now is "AI generated faces don't have all these artifacts 99% of the time" (I'm paraphrasing A LOT, but you get what I mean.)

The only way it sounds like that is if you don't read the article at all and draw all your conclusions from just reading the title.

Don't get me wrong, I'm sure many do just that, but that's not the fault of the study. They clearly state their method for selecting (or "cherry-picking") images.

They used a clickbaity title, they'll get clickbaity judgement.

It's also not in their abstract, which is supposed to contain the most important facts. Their first sentence is about how AI-generated faces are indistinguishable. No, they're not. It's like saying "writing random numbers solves any numerical equation" while not mentioning that I took a gazillion random numbers and did my study on the ones that matched.

I am uncomfortable admitting that I failed 3 of the human ones. In my defense, the guy on the bottom right has pointed teeth like Sweet Tooth.

Damn human female 31 and AI female 44 can get it tho.

She looks great for 44.

Kinda makes sense, right?

The AI images are a representation of what an AI thinks a human "should" look like, so when another AI (likely trained on a similar dataset) tries to classify them, the AI images will more closely fit what it expects a human to look like.

This is a classification made by humans, not by AI

Ahh, you'll be unsurprised to hear I didn't actually read the paper. Thanks for correcting me.

That said, I still generally stand by my comment. While that makes this finding much more interesting, it does also make sense that the AI faces look like what our brains recognize as human.

It does make sense indeed. But it also means AI has become very good at matching our expectations. We have reached a level of very good AI.

Exactly. The AI's job is to generate humanness. The things that don’t look human get discarded, the things that have strong human indicators get kept. Oh look, the AI did its job. Shocked pikachu.

The white thing is probably just a case of biased training data. Which is going to be a problem across all AIs. I wouldn’t be surprised if in 5-10 years (if the fad lasts longer than NFTs lmao) we find out the ‘AIs’ have all been fed biased data as yet another means of large corporations controlling the narrative of the population.

Technology

This is a most excellent place for technology news and articles.

Our Rules

- Follow the lemmy.world rules.

- Only tech related content.

- Be excellent to each other!

- Mod approved content bots can post up to 10 articles per day.

- Threads asking for personal tech support may be deleted.

- Politics threads may be removed.

- No memes allowed as posts, OK to post as comments.

- Only approved bots from the list below; to ask if your bot can be added, please contact us.

- Check for duplicates before posting; duplicates may be removed.