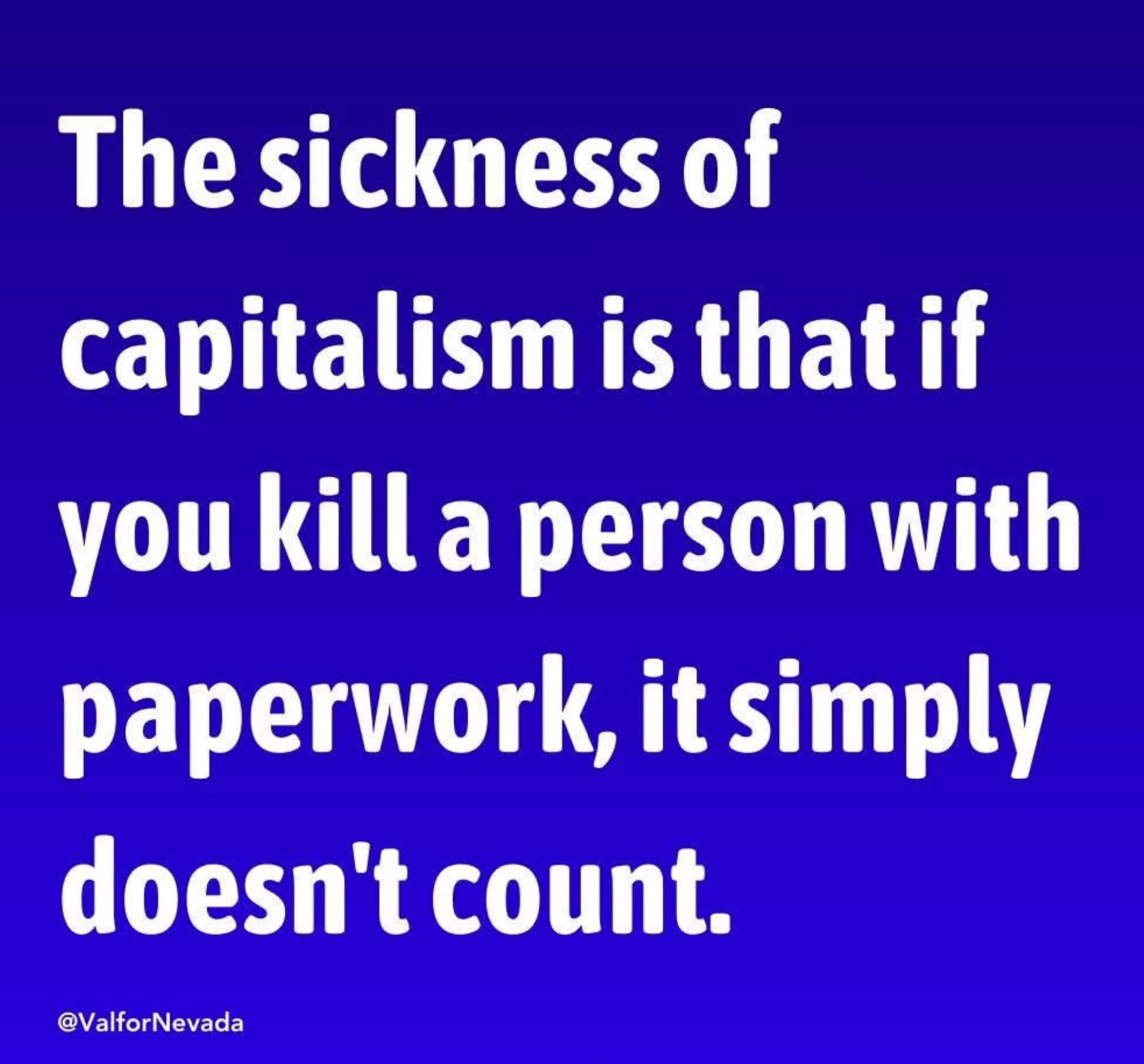

Is it moral for a framework of society to permit one person to have more wealth than 1.5 million households while childhood poverty and homelessness run rampant? 🤔

Nope.

Corporate algorithms don't understand human ethics. If a human in a corporation or their government started making ethical choices they'd quickly lose their job and likely become unemployable.

A lone individual can make moral choices to preserve self (like most of us) OR ethical ones that serve the collective (like Luigi). We cannot have both unless we rise up together.

Workers of the world, unite!

Oh, it's way worse than merely the algorithms.

You see, the algorithms are trained or designed according to the choices of people. Out of the various possibilities, the ones put in place and used are the ones that people chose to put in place and use, and even after their nasty (sometimes deadly) effects on others have been observed, people keep them in use.

The Algorithm isn't a force of nature or an entity with its own will, it's an agent of people. In a company where the people creating the algorithms are paid by other people and follow their orders about how it should be, the people for whom the Algorithm is an agent are the decision makers.

Deflecting the blame with technocratic excuses (such as that it's the Algorithm) is a very old and often used Neoliberal swindle (really just a Tech variant of rule-makers blaming problems on "the rules" as if there is nothing they can do about it, when they themselves had a say in the design of those rules and knew exactly what they would lead to).

My point was that the individual is powerless. Your only counterargument is "neoliberal" ad hominem. That's not going to work, particularly not when speaking to a data analyst with more than a decade of experience.

The individual on one side is indeed powerless (or at least seemed to be, until Luigi showed everybody that things aren't quite like that).

However on the other side there are individuals too and they are not powerless and have in fact chosen to set up the system to make everybody else powerless in order to take advantage of it, and then deflect the blame to "the rules", "the law" or "the algorithms", when those things are really just a 2nd degree expression of the will of said powerful individuals.

(And speaking as somebody who worked in making and using Algorithms in places like Finance: algorithms are very much crafted to encode how humans think they should work - unless we're talking about things done by scientists to reproduce natural processes, algorithms - AI or otherwise - are not some kind of technical embodiment of natural laws, rather they're crafted to produce the results which people want them to produce, via the formulas used in them if not AI, or via what's chosen for the training set if AI.)

My disagreement is not with the point you made but with the language you used: by going on and on about "the algorithm" you are repeating the very propaganda that the people who make all other individuals powerless use to deflect blame away from themselves as decision makers. That's the part I disagree with, not the point you were making.

PS: If your point was however that even the decision makers themselves are powerless because of The Algorithm, then I totally disagree with it (and, as I've said, I've been part of creating Algorithms in an industry which is a heavy user of things like models, so I'm quite familiar with how those things are made to produce certain results rather than the results being the natural outcome of encoding some kind of natural laws) and think that's total bullshit.

A likely good next step for you would be to study the stock market at least to the nuance of options trading and algorithmic trading. If the dots don't connect then fill the gap with Capital until they do.

Qualifications: Have done some extremely evil shit that I regret.

Most of the time in my career that I spent designing and deploying algorithms was in Equity Derivatives, and a lot of that work wasn't even for Market Traded instruments like Options but for OTCs, which are Marked To Model, so it's all a bit more advanced than what you think I should be studying.

Also part of my background is Physics and another part is Systems Analysis, so I both understand the Maths that go into making models and the other parts of that process including the human element (such as how the definition of the inputs, outputs and even the selection of a model as "working" or "not working needs to be redone" is what shapes what the model produces).

One could say I'm intimately familiar with how the sausages are made, and we're not talking about the predictive kind of stuff which is harder for humans to control (because the Market itself serves as the reference for a model's quality, and if it fails to predict the market too often it gets thrown out), but the kind of stuff for which there is no Market and everything is based on how the Traders feel the model should behave in certain conditions, which is a lot more like the situation in which Algorithms are made for companies like Healthcare Insurers.

I can understand that if your background is in predictive modelling you would think that models are genuine attempts at modelling reality (hence insulating the makers of the model from the blame for what the model does), but what we're talking about here is NOT predictive modelling but something else altogether - an automation of the maximizing of certain results whilst minimizing certain risks - and in that kind of situation the model/algorithm is entirely an expression of the will of humans. That is true from the very start, because they defined its goals (minimizing payout, including via Courts) and made a very specific choice of elements for it to take into account (for example, the history of cases where the Health Insurance Company's decisions were taken to Court and it lost, so that it can minimize how often it ends up having to pay out too much), thus shaping its goals and to a great extent how it can reach those goals. Further, once confronted with the results, they approved the model for use.
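To make that concrete, here is a toy sketch of the idea, not anything taken from a real insurer's system. Every name and number in it (`Objective`, `payout_weight`, `lawsuit_weight`, `decide`, the claim amount and probability) is made up for illustration. The only thing it is meant to show is that the same code with the same inputs gives a different decision once the people in charge pick different weights for the objective.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Objective:
    """Weights chosen by the decision makers, not discovered in nature (hypothetical)."""
    payout_weight: float   # how heavily paying a claim counts as a "cost"
    lawsuit_weight: float  # how heavily an expected court loss counts as a "cost"


def decide(claim_amount: float, p_lose_in_court: float, objective: Objective) -> str:
    """Pick whichever action is cheaper under the objective the humans chose."""
    cost_of_paying = objective.payout_weight * claim_amount
    cost_of_denying = objective.lawsuit_weight * p_lose_in_court * claim_amount
    return "deny" if cost_of_denying < cost_of_paying else "pay"


# Same claim, same estimated court risk, two different human-chosen objectives.
cheap_lawsuits = Objective(payout_weight=1.0, lawsuit_weight=1.0)
costly_lawsuits = Objective(payout_weight=1.0, lawsuit_weight=10.0)

print(decide(1000.0, 0.2, cheap_lawsuits))
print(decide(1000.0, 0.2, costly_lawsuits))
```

Nothing in `decide` has a will of its own: the outcome flips purely because of which `Objective` somebody signed off on.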

Technology here isn't an attempt at reproducing reality so as to predict it (though it does have elements of that, in that they're trying to minimize the risk of having to pay lots of money from losing in Court, hence there will be some statistical "predicting" of the likelihood of people taking them to court and winning, which is probably based on the victim's characteristics and situation). It's just an automation of a particularly sociopathic human decision process (i.e. a person trying to unfairly and even illegally deny people payment whilst taking into account the possibility of that backfiring). In this case what the Algorithm does, and even to a large extent how it does it, is defined by what the decision makers want it to do, as is which ways of doing it are acceptable, thus the decision makers are entirely to blame for what it does.

Or if you want it in plain language: if I made an AI robot to get people out of my way, chose to put no limits on the amount of force it could use and gave it blade arms, any deaths it caused would be on me - having chosen the goal, the means and the limits, as well as having accepted the bloody results from testing the robot and deployed it anyway, the blame for actually using such an autonomous device would be mine.

People in this case might not have been killed by blades, and the software wasn't put into a dedicated physical robotic body, but the consequences of that automated agent's decisions are still the fault of the people who decided to create and deploy an automated sociopathic decider whose limits they defined and which they knew would result in deaths.

TIL Death Note is legal.

All Yagami Light had to do was move to the US. Why didn't he just go study overseas? 🤦‍♂️

Abstraction layers of spreadsheets and accountants, baby. My hands are clean!

sentences a few thousand more people to misery, bankruptcy, or death with the stroke of a pen

How many murders per pivot table did you commit today?

It's called Social Murder for anyone who wants to research this specific idea.

I am imagining Wile E. Coyote being crushed by a large bundle of paperwork rather than an anvil or a piano.

Death by 1000 paper cuts.

You have to significantly abstract though. It can't have direct cause and effect, just tangents from cause to the effect.

That's hardly unique in capitalism