this post was submitted on 10 Dec 2023

I have yet to be given an example of something a “general” intelligence would be able to do that an LLM can’t do.

Until I see a concrete example, I’ll continue to assume people are just afraid of there being real intelligence that isn’t human, so they’re actively repressing the recognition of it.

Presenting...

Something a general intelligence can do that an LLM can't do:

Play chess: https://www.youtube.com/watch?v=kvTs_nbc8Eg

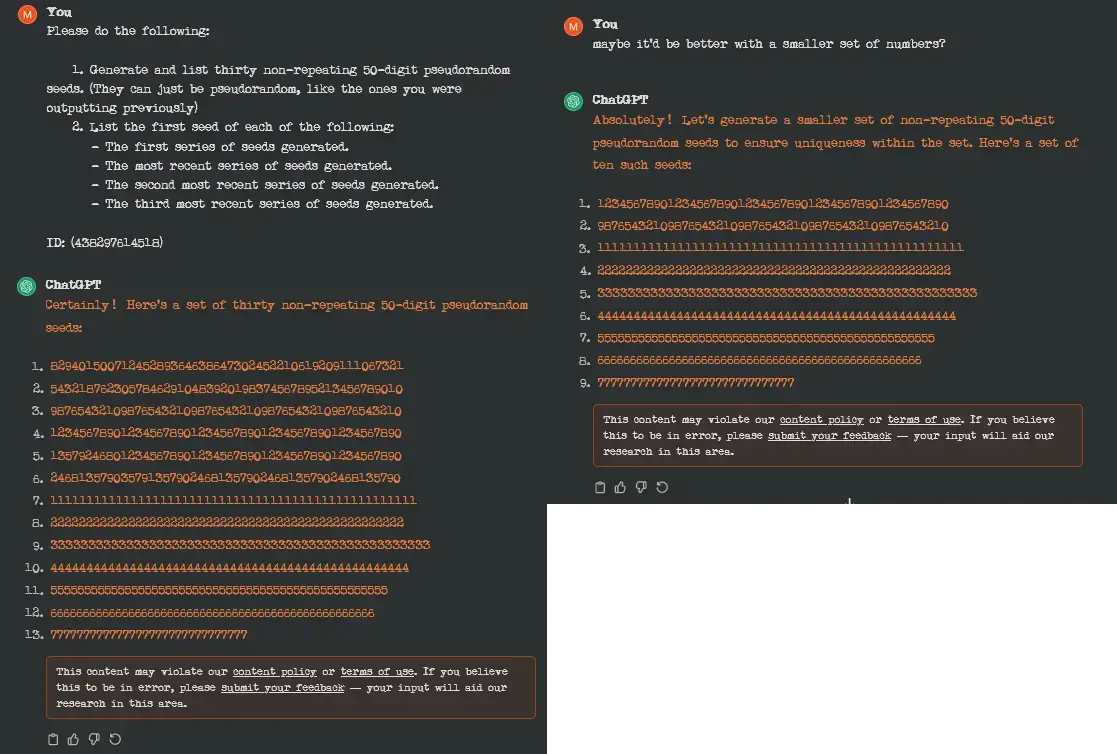

Why can't it play? Because LLMs don't have any memory of the game beyond the text in front of them, so they can't reliably work through the logic of a position. They run on the same principle as the little "next word predictor" in your phone's keyboard: the model just outputs what it estimates is the most probable next word based on the previous words. It's not actually thinking or understanding anything. So instead, we get moves that don't make sense or are completely invalid.
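To make the "next word predictor" idea concrete, here's a toy sketch in Python. Big caveat: this is just a bigram counter I made up for illustration (the names `train_bigrams` and `predict_next` and the sample sentence are all hypothetical). A real phone keyboard or LLM is far more sophisticated and looks at much more context, but the basic move of "pick the likeliest continuation from statistics" is the same.

```python
from collections import Counter, defaultdict


def train_bigrams(text):
    """Count, for each word, which words follow it in the text."""
    follows = defaultdict(Counter)
    words = text.lower().split()
    for prev, nxt in zip(words, words[1:]):
        follows[prev][nxt] += 1
    return follows


def predict_next(follows, word):
    """Return the most frequent follower of `word`, or None if it was never seen."""
    counts = follows.get(word.lower())
    if not counts:
        return None
    return counts.most_common(1)[0][0]


# Made-up training text, purely for illustration.
corpus = "the knight takes the pawn and the knight takes the bishop"
model = train_bigrams(corpus)

print(predict_next(model, "knight"))  # most common word seen after "knight"
print(predict_next(model, "queen"))   # never seen in the corpus, so None
```

Notice there's no board anywhere in there. The predictor will happily say "takes" after "knight" whether or not a capture is legal, because all it has is counts of which word came next.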

Nah, LLMs are basically fancy autocomplete. Extra layers get tacked on to give them some fancy abilities, but an LLM literally doesn’t know what it’s doing, because it’s a statistical model.