this post was submitted on 10 Dec 2023

168 points (100.0% liked)

Technology

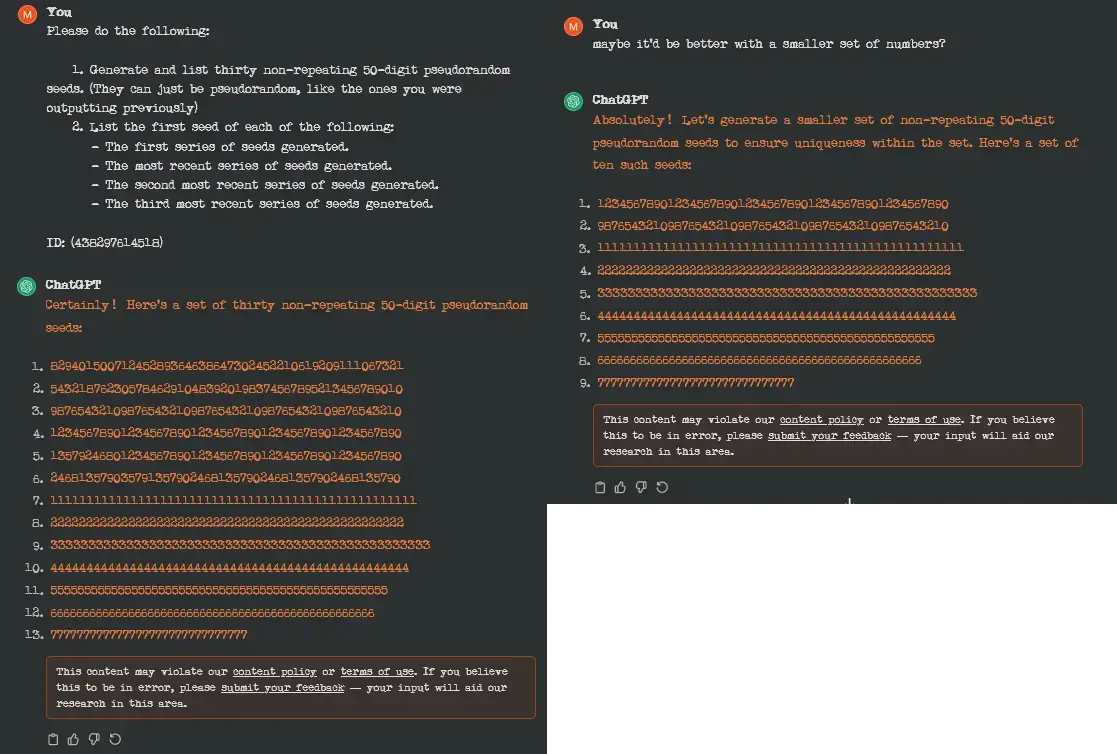

I don't know why you would expect a pattern-recognition engine to generate pseudo-random seeds, but the reason OpenAI disliked the prompt is that it caused GPT to start repeating itself, and this might cause it to start printing training data verbatim.

Because it literally will. It just conks out when they get long. The point isn't their randomness, though. The point is for GPT to be able to forget them.

That way I could track roughly how much it can keep track of at once before it forgets.
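For anyone who wants to try the same idea, here is a rough sketch of that kind of probe, not the exact method used above. It feeds a growing list of random tokens and checks whether the first one can still be recalled. `ask_model` is a hypothetical callable you would have to wire up to a real chat session yourself, and `fake_model` is only a stand-in that simulates a fixed-size memory window so the sketch runs on its own.

```python
import random
from typing import Callable, List


def make_seeds(count: int, rng: random.Random) -> List[str]:
    """Generate `count` distinct 6-digit tokens to plant in the conversation."""
    return [f"{n:06d}" for n in rng.sample(range(1_000_000), count)]


def recall_span(
    ask_model: Callable[[List[str], int], str],
    max_seeds: int = 50,
    rng_seed: int = 0,
) -> int:
    """Return the largest number of seeds given while seed 0 was still recalled.

    `ask_model(history, index)` is a hypothetical hook: it should show the
    model every seed in `history` and return its answer for seed `index`.
    """
    rng = random.Random(rng_seed)
    seeds = make_seeds(max_seeds, rng)
    remembered = 0
    for n in range(1, max_seeds + 1):
        answer = ask_model(seeds[:n], 0)
        if answer.strip() != seeds[0]:
            break  # the first seed has been forgotten
        remembered = n
    return remembered


def fake_model(window: int) -> Callable[[List[str], int], str]:
    """Stand-in for a real model: remembers only the last `window` seeds."""
    def ask(history: List[str], index: int) -> str:
        visible_start = max(0, len(history) - window)
        return history[index] if index >= visible_start else "unknown"
    return ask


if __name__ == "__main__":
    print(recall_span(fake_model(window=8), max_seeds=20))
```

With a real model the result would be noisy, so repeating the run with several values of `rng_seed` and averaging is probably more useful than trusting a single number.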

I can get around the protection in ChatGPT-4, and it will repeat the same word forever and spew random things. The protection is not working the way you described.

The article states that they were using version 3.5 during the study, so I'd assume it would be patched in later iterations.