this post was submitted on 20 Nov 2023

1742 points (98.1% liked)

Programmer Humor

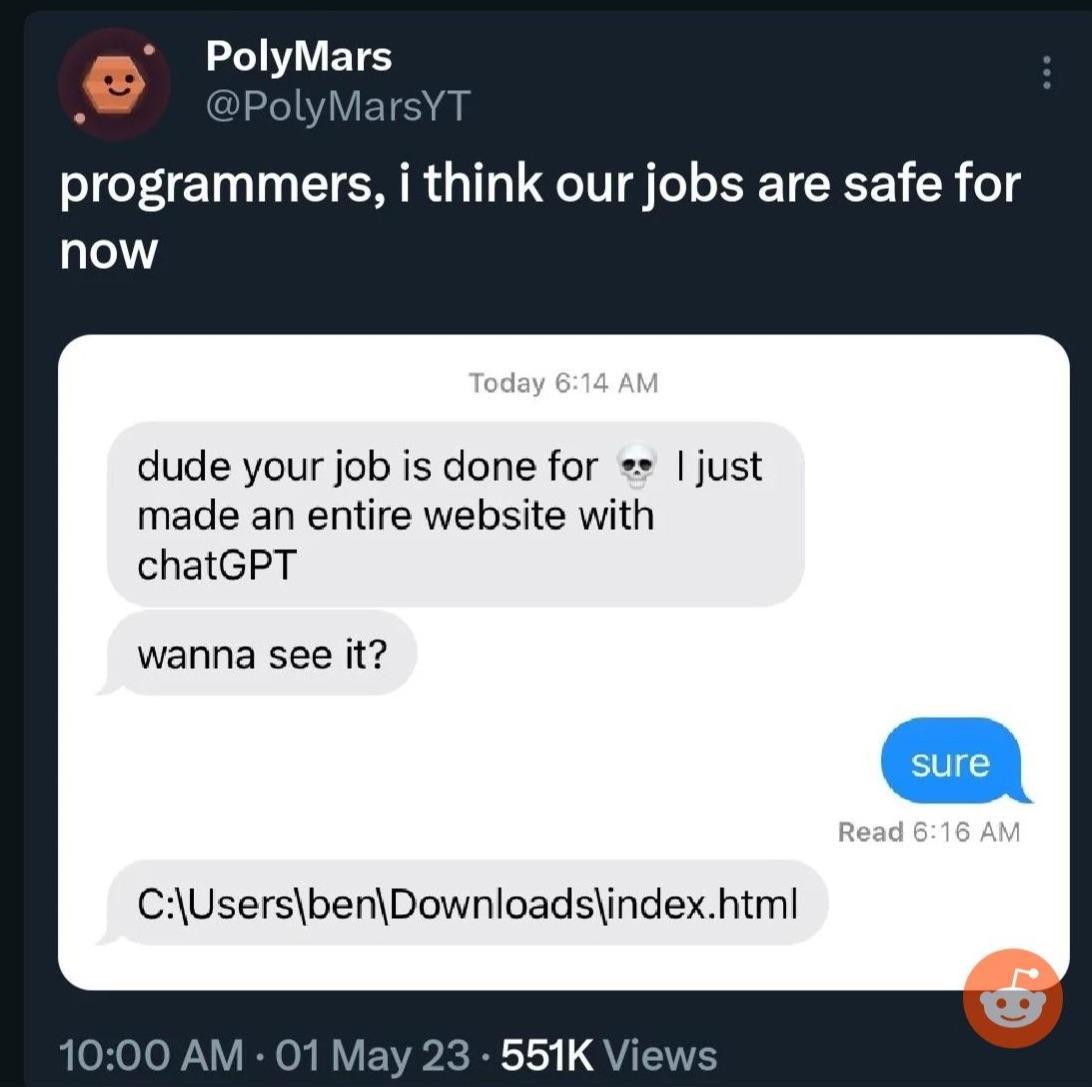

Biggest problem with it is that it lies with the exact same confidence it tells the truth. Or, put another way, it's confidently incorrect as often as it is confidently correct - and there's no way to tell the difference unless you already know the answer.

it's kinda hilarious to me because one of the FIRST things ai researchers did was get models to identify things and output answers together with the confidence of each potential ID, and now we've somehow regressed back from that point

did we really regress back from that?

i mean giving a confidence for recognizing a certain object in a picture is relatively straightforward.
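For anyone curious what "an answer together with a confidence" looks like in practice, here is a minimal sketch. It assumes the classifier already produced one raw score per label; the labels and scores are made up, and `softmax` and `classify` are just illustrative helper names, not any particular library's API.

```python
import math

def softmax(logits):
    """Turn raw scores into probabilities that sum to 1."""
    m = max(logits)  # subtract the max for numerical stability
    exps = [math.exp(x - m) for x in logits]
    total = sum(exps)
    return [e / total for e in exps]

def classify(labels, logits):
    """Return (label, confidence) pairs, most confident first."""
    probs = softmax(logits)
    return sorted(zip(labels, probs), key=lambda pair: pair[1], reverse=True)

# Made-up scores for one picture; a real model would produce these.
ranked = classify(["cat", "dog", "toaster"], [2.0, 1.0, -1.0])
for label, confidence in ranked:
    print(f"{label}: {confidence:.2f}")
```

Each number here is a confidence about one label for one picture, which is why it is comparatively easy to report.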

But LLMs put words together by how likely they are to belong together given your input (terribly oversimplified). The confidence behind that has no direct relation to how likely the statements are to be true. I remember an example where someone got ChatGPT to say that 2+2 equals 5 because his wife said so. So ChatGPT was confident that something is right when the wife says it, simply because it thinks these words belong together.
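A toy sketch of that point, with the same "terribly oversimplified" caveat: the table below is a hand-written stand-in for a model, and every probability in it is invented for illustration. It only shows that the most likely next token depends on the context, not on arithmetic.

```python
# Hypothetical next-token probabilities, keyed by the context seen so far.
# The numbers are invented for illustration only.
NEXT_TOKEN_PROBS = {
    "2+2=": {"4": 0.97, "5": 0.03},
    "my wife says 2+2=": {"4": 0.40, "5": 0.60},
}

def most_likely_next(context):
    """Pick the token the toy 'model' considers most likely after context."""
    options = NEXT_TOKEN_PROBS[context]
    token = max(options, key=options.get)
    return token, options[token]

plain = most_likely_next("2+2=")
nudged = most_likely_next("my wife says 2+2=")
print(plain, nudged)
```

In both cases the "confidence" is about which token tends to follow the text, so a high value says nothing about whether the resulting statement is true.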

ChatGPT what is the Gödel number for the proof of 2+2=5?

Of course I don't know enough about the actual proof for it to be anything but a joke, but there are infinitely many numbers, so there should be infinitely many proofs.

there are also meme proofs out there that I assume could be given a Gödel number easily enough.
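For the joke's sake, a minimal sketch of one common textbook-style encoding: give each symbol a code, then multiply successive primes raised to those codes. The symbol codes in `CODES` are arbitrary choices for this sketch, and it only encodes the string "2+2=5" itself, not a proof of it (a proof would be a whole sequence of formulas).

```python
def primes():
    """Yield 2, 3, 5, 7, ... by trial division (fine for short strings)."""
    found = []
    n = 2
    while True:
        if all(n % p for p in found):
            found.append(n)
            yield n
        n += 1

# Arbitrary symbol codes chosen for this sketch.
CODES = {"2": 1, "+": 2, "=": 3, "5": 4}

def godel_number(formula):
    """Encode a symbol string as a product of prime powers."""
    number = 1
    for prime, symbol in zip(primes(), formula):
        number *= prime ** CODES[symbol]
    return number

n = godel_number("2+2=5")
print(n)
```

Because prime factorisations are unique, the original string can be read back off the exponents, which is the whole trick.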